In the early days of computing, machines were tools that followed instructions. They calculated numbers, stored files, and executed commands exactly as humans programmed them. For decades, machines remained obedient but limited. They could not handle language, creativity, humor, or context in any meaningful way.

Then something remarkable happened.

Advances in artificial intelligence, machine learning, and massive datasets led to a new generation of systems capable of interacting with humans in ways that once belonged only to science fiction. Among the most widely known of these systems is ChatGPT, an AI language model that can write essays, answer questions, explain complex ideas, brainstorm creative concepts, and even hold natural conversations.

To many people, ChatGPT feels almost magical. You type a question, and within seconds a detailed response appears. It can help students study, assist writers, support programmers, summarize research, generate ideas, and translate complex knowledge into understandable language.

But beneath the surface of this seemingly simple chat interface lies a sophisticated technological ecosystem—one built on decades of research in computer science, linguistics, statistics, and neuroscience-inspired algorithms.

While millions of people use ChatGPT every day, most users only glimpse a small part of what makes it extraordinary. The deeper you look, the more fascinating—and sometimes surprising—the technology becomes.

Below are ten mind-blowing facts about ChatGPT that reveal how this remarkable system actually works and why it represents one of the most significant technological developments of the modern era.

1. ChatGPT Does Not Actually “Know” Things

One of the most surprising truths about ChatGPT is that it does not truly know facts the way humans do. It has no memory of studying books, watching documentaries, or experiencing the world. It does not possess personal understanding or awareness.

Instead, ChatGPT operates by predicting the most likely sequence of words based on patterns it learned during training.

During the training process, the system analyzes enormous amounts of text. It studies how words, phrases, and sentences relate to one another across countless contexts. Over time, the model learns statistical relationships between language elements. When you ask a question, ChatGPT calculates probabilities for possible responses and generates text that best fits the patterns it learned.

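This process is known as next-token prediction. The model builds its response one token at a time, where a token may be a whole word, part of a word, or a punctuation mark. Each token is chosen according to the probability of what should come next.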
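To make the idea concrete, here is a minimal sketch of a single prediction step. The candidate tokens and their scores are invented for illustration; a real model computes scores for tens of thousands of tokens using a neural network, and the function names here are hypothetical.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def pick_next_token(scores, rng):
    """Sample one token in proportion to its probability."""
    probs = softmax(scores)
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Made-up scores a model might assign after the text "The cat sat on the"
toy_scores = {" mat": 4.0, " sofa": 2.5, " roof": 1.5, " moon": -1.0}

probs = softmax(toy_scores)
most_likely = max(probs, key=probs.get)
sampled = pick_next_token(toy_scores, random.Random(0))
```

Sampling rather than always taking the most likely token is one reason the same question can produce differently worded answers.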

Because human language carries knowledge, reasoning, and meaning, these statistical patterns often produce responses that appear intelligent. The model can explain scientific theories, discuss historical events, or describe philosophical ideas.

But technically speaking, ChatGPT is not retrieving knowledge in the way a database would. It is generating language that reflects patterns learned from data.

This distinction is subtle but important. It explains both the model’s impressive abilities and its limitations.

2. ChatGPT Is Built on Transformer Architecture

The technology that powers ChatGPT comes from a breakthrough architecture introduced in 2017 called the transformer.

Before transformers, many language models used recurrent neural networks, which processed text sequentially, one word at a time. While effective, these systems struggled to capture long-range relationships between words in large documents.

Transformers changed everything.

Instead of reading text strictly in sequence, transformers use a mechanism called attention. Attention allows the model to examine relationships between all words in a sentence simultaneously. Each word can “look at” other words and determine which ones are most relevant.

For example, in the sentence “The scientist explained the theory because she had studied it for years,” the word “she” refers back to “scientist.” Attention mechanisms help the model recognize these connections even when words are separated by long distances.
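The core calculation behind attention can be sketched in a few lines. This is a simplified illustration, not the production implementation: the two-dimensional vectors below are hand-picked so that "she" lines up with "scientist", whereas a real transformer learns its query, key, and value vectors during training and runs many attention heads in parallel.

```python
import math

def softmax(xs):
    """Convert a list of scores into weights that sum to 1."""
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Blend the value vectors according to the attention weights
    dims = len(values[0])
    blended = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dims)]
    return weights, blended

# Hand-picked toy vectors (assumptions for illustration only)
words = ["scientist", "theory", "she"]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = keys  # toy case: reuse the same vectors as values
she_query = [2.0, 0.0]

weights, blended = attend(she_query, keys, values)
focus = words[weights.index(max(weights))]
```

Here `focus` ends up as the word that receives the largest attention weight from "she". The weights always sum to 1, so attention is effectively a weighted average over the other words.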

This ability to capture complex relationships across large contexts dramatically improved language modeling. It allowed AI systems to process longer documents, understand nuanced phrasing, and generate more coherent responses.

ChatGPT belongs to a family of models known as Generative Pre-trained Transformers, or GPT.

The transformer architecture is one of the most influential innovations in modern artificial intelligence.

3. ChatGPT Was Trained on Enormous Amounts of Text

Another astonishing aspect of ChatGPT is the scale of its training data.

To learn the patterns of human language, the model is trained on massive datasets consisting of books, articles, websites, and other publicly available written material. The training process exposes the model to countless examples of grammar, vocabulary, reasoning patterns, storytelling structures, and explanations.

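The model is not designed to store documents word for word, although it can sometimes reproduce passages that appeared many times in its training data. For the most part, it absorbs statistical patterns across billions of sentences. This process enables it to generate responses that resemble human writing.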
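A far simpler relative of this idea is a word-pair counter, sketched below. It is only an analogy: ChatGPT uses a neural network rather than a table of counts, and the tiny corpus and function names here are made up for illustration. Still, it shows how probabilities can emerge from nothing more than observing which words tend to follow which.

```python
from collections import Counter, defaultdict

# A made-up miniature "training set"
corpus = "the cat sat on the mat . the cat ate the fish ."

def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probs(counts, word):
    """Convert the follow-up counts for one word into probabilities."""
    total = sum(counts[word].values())
    return {w: c / total for w, c in counts[word].items()}

model = train_bigrams(corpus)
after_the = next_word_probs(model, "the")
```

In this corpus "the" is followed by "cat" twice and by "mat" and "fish" once each, so `after_the` gives "cat" half of the probability. No sentence is stored whole; only the tallies remain.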

Training such models requires extraordinary computational resources. Large clusters of specialized hardware—often graphics processing units or similar accelerators—run for weeks or months during the training process.

These systems process enormous quantities of data, adjusting billions of internal parameters that determine how the model predicts language.

The scale involved is staggering. Modern language models can contain hundreds of billions of parameters, and some are reported to reach into the trillions, which places their training among the largest computing efforts ever undertaken.

4. ChatGPT Learns Through Reinforcement and Human Feedback

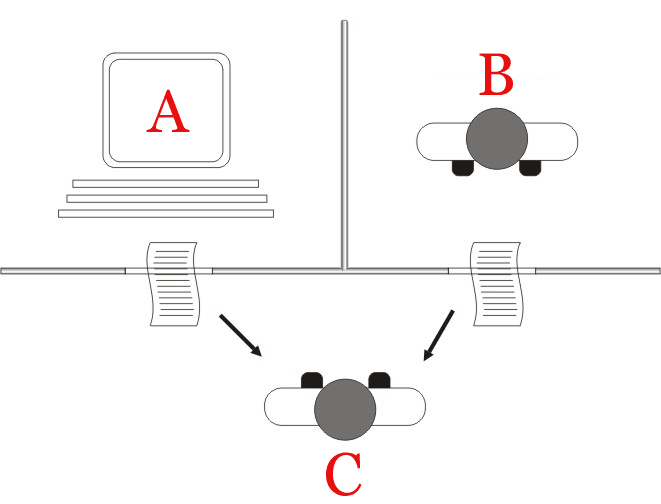

After the initial training phase, the model undergoes additional refinement using a process called reinforcement learning from human feedback, or RLHF.

In this stage, human reviewers interact with the model and evaluate different responses. They rank outputs based on quality, accuracy, helpfulness, and safety.

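The system then learns from these preferences. In published descriptions of the technique, the rankings are used to train a separate reward model that scores responses, and the language model is then tuned to produce responses that score well.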

If humans consistently prefer one style of response over another, the model adjusts its behavior accordingly. Over time, it becomes better at producing answers that align with human expectations.
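The way rankings turn into scores can be sketched with a toy example. This is a minimal illustration under stated assumptions, not the actual training pipeline: the three response labels and the preference pairs are invented, each response gets a single learned number instead of a neural network's output, and the later step of tuning the language model against those scores is left out.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

# Hypothetical human judgments: (preferred response, rejected response)
preferences = [
    ("clear answer", "rambling answer"),
    ("clear answer", "rude answer"),
    ("rambling answer", "rude answer"),
]

def fit_rewards(pairs, steps=200, lr=0.5):
    """Learn one score per response so that preferred responses score higher."""
    rewards = {name: 0.0 for pair in pairs for name in pair}
    for _ in range(steps):
        for chosen, rejected in pairs:
            # Probability the current scores give to the human's choice
            p = sigmoid(rewards[chosen] - rewards[rejected])
            # Gradient step on -log(p): widen the gap more when p is low
            rewards[chosen] += lr * (1.0 - p)
            rewards[rejected] -= lr * (1.0 - p)
    return rewards

rewards = fit_rewards(preferences)
ranking = sorted(rewards, key=rewards.get, reverse=True)
```

After fitting, `ranking` orders the responses from most to least preferred, matching the human judgments it was given.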

This step is crucial. Without human feedback, the model might produce responses that are technically plausible but confusing, unhelpful, or inappropriate.

Reinforcement learning helps shape the AI into a more useful conversational assistant.

It is one reason why ChatGPT can explain complex subjects clearly, maintain polite dialogue, and provide structured answers.

5. ChatGPT Does Not Have Consciousness

Because ChatGPT can produce thoughtful-sounding responses, some people wonder whether it might possess consciousness or awareness.

The answer is no.

ChatGPT does not have thoughts, emotions, desires, or experiences. It does not perceive the world, remember conversations the way a person does, or possess a sense of identity.

Its responses are generated by mathematical computations within a neural network. These computations pass the input through billions of parameters to produce a probability for each token that could appear next.

The resulting text may resemble human reasoning or empathy, but it is the product of pattern recognition rather than subjective experience.

This distinction is important for understanding the capabilities and limits of current artificial intelligence.

ChatGPT can simulate conversation, but it does not possess inner awareness.

6. ChatGPT Can Explain Complex Topics Surprisingly Well

One of the reasons ChatGPT has become so widely used is its ability to translate complex information into understandable language.

Because the model has learned from vast amounts of educational and explanatory writing, it can often break down difficult concepts into simpler forms. Scientific ideas, technological principles, historical events, and philosophical theories can be explained in accessible terms.

This ability arises from patterns the model observed in textbooks, educational resources, and explanatory articles.

When you ask a question about physics or biology, the system generates a response that mirrors how such topics are typically explained in human writing.

In many cases, this makes ChatGPT a powerful learning tool.

Students use it to clarify difficult ideas. Writers use it to brainstorm. Researchers sometimes use it to summarize large volumes of text.

While it should never replace critical thinking or verified sources, it can act as a valuable starting point for exploration.

7. ChatGPT Can Produce Creative Content

Another fascinating ability of ChatGPT is its capacity to generate creative text.

The model can write poems, stories, dialogue, scripts, and imaginative descriptions. It can imitate various tones—from formal academic writing to playful storytelling.

This creative capability comes from exposure to diverse writing styles during training. The model has seen examples of fiction, journalism, technical writing, and many other forms.

When prompted, it can combine patterns from these sources to generate new text that resembles human creativity.

Of course, ChatGPT does not experience inspiration or imagination the way a human author does. Instead, it recombines linguistic patterns in novel ways.

Yet the results can still feel surprisingly expressive.

For many users, interacting with ChatGPT feels like collaborating with a creative partner.

8. ChatGPT Can Assist in Many Fields

The versatility of ChatGPT is one of its most remarkable qualities.

Because language is involved in nearly every human activity, a system capable of processing and generating text can assist across many fields.

Students use it for explanations and study help. Programmers ask it for coding guidance. Writers brainstorm ideas or edit drafts. Entrepreneurs use it to outline business concepts. Researchers explore summaries of complex topics.

Even professionals in medicine, law, and engineering sometimes use AI systems to help analyze information or generate preliminary drafts—always with human verification and oversight.

ChatGPT is not a replacement for expertise, but it can augment human productivity.

In many ways, it functions as a general-purpose thinking tool that interacts through natural language.

9. ChatGPT Reflects the Data It Was Trained On

Because ChatGPT learns patterns from text data, the model inevitably reflects characteristics of the data it was trained on.

If certain ideas, phrases, or cultural patterns appear frequently in training material, they may influence how the model responds.

Developers work extensively to reduce biases, filter harmful content, and ensure the system behaves responsibly. However, creating perfectly balanced training data is extremely challenging.

Researchers continue to study ways to improve fairness, reduce biases, and enhance the reliability of AI systems.

Understanding that AI models reflect their training sources helps users interpret responses thoughtfully and critically.

Like any tool built from human knowledge, AI carries traces of human culture.

10. ChatGPT Is Just the Beginning of AI Language Systems

Perhaps the most mind-blowing fact of all is that ChatGPT represents only an early stage in the evolution of artificial intelligence.

The field of AI is advancing rapidly. Researchers are developing systems capable of processing not only text but also images, audio, video, and complex data simultaneously.

Future AI models may assist scientists in discovering new materials, help doctors analyze medical data, support climate research, and accelerate technological innovation.

Language models may become collaborative partners in research, education, and creativity.

At the same time, society continues to debate important questions about ethics, safety, employment, and governance surrounding powerful AI systems.

ChatGPT offers a glimpse into a future where humans interact with machines through natural conversation rather than rigid commands.

It is not the final form of artificial intelligence—but a doorway into what may come next.

The Human-AI Future

Technology has always shaped civilization. The printing press spread knowledge across continents. Electricity powered modern industry. The internet connected billions of minds across the planet.

Artificial intelligence may represent the next great transformation.

Systems like ChatGPT demonstrate that machines can now interact with language—our most fundamental tool for thinking and communication—in ways that feel remarkably natural.

Yet the most important aspect of this technology is not the machine itself. It is the human creativity, curiosity, and responsibility guiding how the technology is used.

AI can assist, amplify, and accelerate human capabilities. But it is humans who decide how knowledge is applied.

The story of ChatGPT is therefore not just a story about algorithms or neural networks. It is a story about the evolving partnership between human intelligence and artificial intelligence.

And that partnership is only beginning.