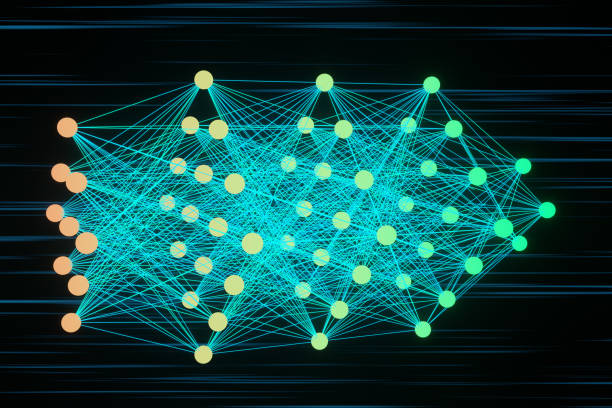

Neural networks are one of the most transformative scientific and technological ideas of the modern era. They sit at the intersection of mathematics, computer science, neuroscience, and engineering. They power voice assistants, recommend what you watch next, help doctors analyze medical images, and even generate human-like language. Yet beneath their astonishing capabilities lies a surprisingly elegant idea inspired by biology: systems of interconnected units that learn from experience.

A neural network is a computational model made up of layers of artificial neurons. Each artificial neuron receives inputs, processes them through mathematical operations, and passes an output forward. Through training on data, the network adjusts internal parameters—called weights—so that it improves its performance on tasks such as recognizing images, translating languages, or predicting patterns.

But neural networks are not just technical tools. They represent a profound shift in how we think about intelligence itself. Instead of programming explicit rules for every situation, we design systems that learn patterns from examples. This shift from instruction to adaptation has reshaped technology and raised deep philosophical questions about cognition, creativity, and the nature of thought.

What follows are fifteen incredible facts about neural networks—each revealing a different dimension of their scientific depth and transformative power.

1. Neural Networks Are Inspired by the Human Brain—But They Are Not the Brain

The very term “neural network” comes from biology. In the human brain, billions of neurons communicate through electrical and chemical signals. Each neuron receives inputs from other neurons through dendrites, processes them in the cell body, and sends outputs along axons. The connections between neurons, called synapses, strengthen or weaken over time, forming the basis of learning and memory.

Artificial neural networks borrow this conceptual structure. They consist of nodes, often called artificial neurons, connected by weighted links. Inputs are multiplied by weights, summed together, and passed through an activation function that determines the output.
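As a minimal sketch of the computation just described, the snippet below implements one artificial neuron in plain Python. The sigmoid activation, the bias term, and the numeric values are assumptions chosen for illustration; the text above does not prescribe any of them.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias):
    """Multiply inputs by weights, sum them, add a bias, apply the activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

# Hypothetical values: 1.0 * 0.5 + 2.0 * (-0.25) + 0.0 = 0.0, and sigmoid(0.0) = 0.5
output = neuron_output(inputs=[1.0, 2.0], weights=[0.5, -0.25], bias=0.0)
```

Changing a weight changes how strongly the corresponding input pushes the output up or down, which is the quantity training adjusts.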

Yet the similarity ends there. Biological neurons are vastly more complex than artificial ones. Real neurons operate through electrochemical processes, exhibit dynamic temporal behavior, and form extraordinarily intricate networks. Artificial neural networks are simplified mathematical abstractions. They capture the idea of interconnected processing units, but they do not replicate the full richness of biological brains.

Still, the inspiration from neuroscience remains powerful. The idea that intelligence can emerge from networks of simple units connected together was revolutionary—and it continues to shape research in both artificial intelligence and cognitive science.

2. The Concept Dates Back to the 1940s

Neural networks may feel modern, but their theoretical foundations reach back to the 1940s. In 1943, Warren McCulloch and Walter Pitts introduced a mathematical model of a neuron. Their model was simple: a unit that receives binary inputs, sums them, and produces a binary output depending on whether a threshold is crossed.

This early abstraction laid the groundwork for computational neuroscience and artificial intelligence. It suggested that networks of such simplified neurons could, in principle, compute logical functions.
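A simplified sketch of such a threshold unit is shown below. It keeps only the parts described above (binary inputs, a sum, a threshold) and leaves out the inhibitory inputs of the original 1943 model; the function names are made up for this example.

```python
def threshold_unit(inputs, threshold):
    """Output 1 if enough binary inputs are active, otherwise 0."""
    return 1 if sum(inputs) >= threshold else 0

def logical_and(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return threshold_unit([a, b], threshold=2)

def logical_or(a, b):
    # A single active input is enough to reach a threshold of 1.
    return threshold_unit([a, b], threshold=1)

# Truth tables over the inputs (0,0), (0,1), (1,0), (1,1)
and_table = [logical_and(a, b) for a in (0, 1) for b in (0, 1)]  # [0, 0, 0, 1]
or_table = [logical_or(a, b) for a in (0, 1) for b in (0, 1)]    # [0, 1, 1, 1]
```

The same unit computes different logical functions depending only on its threshold, which hints at how networks of such units can be combined into larger computations.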

Later, in 1958, Frank Rosenblatt developed the perceptron, one of the earliest trainable neural network models. The perceptron could learn to classify inputs into categories by adjusting weights based on errors. It was a milestone, though a single-layer perceptron can only solve linearly separable problems; it cannot, for example, learn the XOR function.
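The error-driven weight adjustment can be sketched with the classic perceptron learning rule. The training data (the logical OR function), the learning rate, and the number of epochs below are illustrative assumptions, not details of Rosenblatt's original work.

```python
def predict(weights, bias, x):
    """Output 1 if the weighted sum is positive, otherwise 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0

def train_perceptron(samples, epochs=20, lr=1.0):
    """Nudge weights toward the target whenever a prediction is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias

# Logical OR is linearly separable, so the rule settles on a correct boundary.
or_samples = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]
weights, bias = train_perceptron(or_samples)
predictions = [predict(weights, bias, x) for x, _ in or_samples]  # [0, 1, 1, 1]
```

When a prediction is already correct the error is zero and nothing changes, so learning happens only on mistakes.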

These early developments showed that learning machines were possible—but computational limitations and theoretical challenges led to periods of reduced interest, often called “AI winters.” Only decades later, with more data and vastly greater computing power, did neural networks achieve their explosive resurgence.

3. They Learn Through Adjusting Weights Using Mathematical Optimization

At the heart of neural networks lies learning. Unlike traditional programs that follow fixed instructions, neural networks modify their internal parameters in response to data.

The process typically involves a method called gradient descent. When a neural network makes a prediction, a loss function measures the difference between the predicted output and the correct answer. An algorithm known as backpropagation then calculates how much each weight contributed to that error, and gradient descent adjusts each weight in the direction that reduces the loss.

Backpropagation efficiently computes gradients, the partial derivatives that indicate how small changes in weights affect the overall error. This algorithm, popularized in the 1980s, was crucial to enabling deep learning.
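To make the idea concrete, here is a small sketch of backpropagation on a network with one hidden unit and one output weight. The architecture (a tanh hidden unit followed by a linear output), the squared-error loss, and all numbers are assumptions chosen to keep the chain rule visible. The result is compared against a numerical estimate of the same derivatives.

```python
import math

def forward(w1, w2, x):
    h = math.tanh(w1 * x)   # hidden activation
    y_hat = w2 * h          # network output
    return h, y_hat

def loss(w1, w2, x, y):
    _, y_hat = forward(w1, w2, x)
    return (y_hat - y) ** 2

def backprop(w1, w2, x, y):
    """Apply the chain rule from the loss back to each weight."""
    h, y_hat = forward(w1, w2, x)
    d_yhat = 2.0 * (y_hat - y)           # dL/dy_hat
    grad_w2 = d_yhat * h                 # dL/dw2
    d_h = d_yhat * w2                    # dL/dh
    grad_w1 = d_h * (1.0 - h ** 2) * x   # dL/dw1, using tanh'(z) = 1 - tanh(z)^2
    return grad_w1, grad_w2

def numeric_grad(f, value, eps=1e-6):
    """Central-difference estimate of df/dvalue, used only as a sanity check."""
    return (f(value + eps) - f(value - eps)) / (2 * eps)

w1, w2, x, y = 0.5, -1.5, 2.0, 1.0   # hypothetical weights and one training example
grad_w1, grad_w2 = backprop(w1, w2, x, y)
check_w1 = numeric_grad(lambda v: loss(v, w2, x, y), w1)
check_w2 = numeric_grad(lambda v: loss(w1, v, x, y), w2)
```

The intermediate value `d_yhat` is reused for both weights, which is where the efficiency comes from: shared pieces of the derivative are computed once rather than once per weight.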

The mathematics underlying training is grounded in calculus and linear algebra. The network’s learning is essentially a process of navigating a high-dimensional landscape in search of lower error. Through repeated adjustments across many examples, it gradually improves.
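The search for lower error can be sketched with gradient descent on the simplest possible model, a single weight `w` fitted so that `w * x` matches `y`. The data, learning rate, and step count are assumptions for illustration.

```python
def loss(w, data):
    """Mean squared error of the prediction w * x against y."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def gradient(w, data):
    """Derivative of the mean squared error with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in data) / len(data)

data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # generated by y = 2 * x
w = 0.0
learning_rate = 0.05
history = []
for step in range(100):
    history.append(loss(w, data))
    w -= learning_rate * gradient(w, data)   # step downhill on the loss surface
```

After the loop `w` is very close to 2, and `history` records the loss shrinking at every step. A real network repeats the same update for every weight at once, with backpropagation supplying the gradients.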

What feels like intelligence emerges from iterative optimization.

4. Deep Learning Refers to Networks with Many Layers

The term “deep learning” refers to neural networks with multiple hidden layers between input and output. Each layer transforms the representation of data, extracting progressively more abstract features.

For example, in image recognition, early layers might detect edges and simple shapes. Middle layers might identify textures or patterns. Deeper layers may recognize objects such as faces or animals.

The depth of a network enables it to represent complex hierarchical relationships. However, deeper networks are harder to train due to issues such as vanishing or exploding gradients. Advances in activation functions, normalization techniques, and architecture design have made deep networks more stable and efficient.
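A rough sketch of why gradients can vanish: during backpropagation the gradient is multiplied by an activation derivative at every layer, and the derivative of the sigmoid function never exceeds 0.25. The snippet below multiplies that factor across layers. It deliberately ignores the weights, which also enter the product in a real network, so treat it as an illustration of the trend rather than a model of any particular architecture.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_derivative(z):
    s = sigmoid(z)
    return s * (1.0 - s)   # peaks at 0.25 when z = 0

def gradient_scale(depth, z=0.0):
    """Product of `depth` sigmoid derivatives, all evaluated at the same z."""
    scale = 1.0
    for _ in range(depth):
        scale *= sigmoid_derivative(z)
    return scale

# Even in the best case (z = 0), the factor shrinks as 0.25 ** depth.
scales = {depth: gradient_scale(depth) for depth in (1, 5, 20)}
```

At a depth of 20 the factor is below one trillionth, so the earliest layers would receive almost no learning signal. This is one reason activation functions such as ReLU, whose derivative is 1 for positive inputs, became popular.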

Deep learning has revolutionized fields such as computer vision, speech recognition, and natural language processing, allowing systems to achieve or surpass human-level performance on certain tasks.

5. Neural Networks Excel at Pattern Recognition

One of the most extraordinary strengths of neural networks is their ability to detect patterns in large, complex datasets.

Humans are naturally good at recognizing patterns—faces in crowds, voices in noise, shapes in shadows. Neural networks extend this ability to digital data. They can learn patterns in pixel intensities, sound waves, text sequences, and even genomic data.

Convolutional neural networks, designed specifically for image data, exploit spatial structure by applying filters across images. Recurrent neural networks and transformer-based architectures handle sequential data, capturing relationships across time or context.
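The filter-sliding operation at the core of a convolutional layer can be sketched in a few lines. The image and the edge-detecting filter below are hand-picked for illustration; in a trained network the filter values are learned. As in most deep learning libraries, the filter is applied without flipping, which is strictly speaking a cross-correlation.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output

# A 4x4 image: dark left half, bright right half.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Responds where brightness increases from left to right.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
feature_map = convolve2d(image, vertical_edge)
# feature_map == [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The middle column of the result lights up exactly where the edge sits. The same small filter is reused at every position, so the layer needs far fewer weights than a fully connected one.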

Pattern recognition is not magic. It arises from mathematical transformations and weight adjustments. But the scale at which neural networks perform this recognition—across millions or billions of parameters—is unprecedented.

They can find subtle correlations that are invisible to human perception.

6. Neural Networks Can Approximate Any Continuous Function

A profound theoretical result known as the universal approximation theorem states that a feedforward neural network with a single hidden layer and a suitable nonlinear activation function can approximate any continuous function on a closed, bounded domain to any desired accuracy, given sufficient neurons.

This does not mean that training such a network is easy or efficient. But it means that neural networks are extraordinarily flexible. They are not limited to specific types of tasks; they are general-purpose function approximators.
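The approximation claim can be illustrated constructively for a one-dimensional function. The sketch below builds a one-hidden-layer ReLU network whose weights are set by hand, not by training, so that it linearly interpolates the target at evenly spaced points. The target (`math.sin` on the interval from 0 to pi), the ReLU activation, and the helper names are assumptions for this example.

```python
import math

def relu(z):
    return max(0.0, z)

def build_relu_network(f, a, b, hidden_units):
    """Return (bias, knots, coeffs) for a one-hidden-layer ReLU network that
    linearly interpolates f at hidden_units + 1 evenly spaced points on [a, b]."""
    step = (b - a) / hidden_units
    knots = [a + k * step for k in range(hidden_units)]
    slopes = [(f(x + step) - f(x)) / step for x in knots]
    # Each hidden unit contributes the change in slope at its knot.
    coeffs = [slopes[0]] + [slopes[k] - slopes[k - 1] for k in range(1, hidden_units)]
    return f(a), knots, coeffs

def evaluate(network, x):
    bias, knots, coeffs = network
    return bias + sum(c * relu(x - k) for c, k in zip(coeffs, knots))

def max_error(f, network, a, b, samples=1000):
    points = [a + (b - a) * i / samples for i in range(samples + 1)]
    return max(abs(f(x) - evaluate(network, x)) for x in points)

# Worst-case error on [0, pi] for networks with 4, 16, and 64 hidden units.
errors = {
    n: max_error(math.sin, build_relu_network(math.sin, 0.0, math.pi, n), 0.0, math.pi)
    for n in (4, 16, 64)
}
```

The worst-case error drops as hidden units are added, which is the theorem's promise in miniature. Finding such weights through training, for functions with many inputs, is the hard part.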

This flexibility explains why neural networks are applied in fields ranging from physics simulations to financial forecasting. If a continuous relationship between inputs and outputs exists, a sufficiently large neural network can, in principle, approximate it.

The theorem underscores a remarkable truth: complexity can emerge from simple building blocks arranged in sufficient numbers.

7. They Require Vast Amounts of Data

One of the driving forces behind the success of neural networks is the availability of large datasets. Training deep networks often requires thousands, millions, or even billions of examples.

Why so much data? Because neural networks contain many parameters. A deep model may have millions or billions of weights. To estimate these reliably, abundant data is essential.
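To see how quickly weights accumulate, the following sketch counts the parameters of a small fully connected network. The layer widths are hypothetical, picked to resemble a modest image classifier.

```python
def count_parameters(layer_sizes):
    """Weights plus biases for a fully connected network with these layer widths."""
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out   # one weight per input-output connection
        total += n_out          # one bias per output unit
    return total

# 784 inputs, two hidden layers of 512 units, 10 outputs
small_net = count_parameters([784, 512, 512, 10])   # 669706
```

Even this small network has roughly two thirds of a million adjustable numbers, because the count grows with the product of adjacent layer widths.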

Data serves as experience. Just as humans refine skills through repeated exposure, neural networks improve by processing diverse examples. More data generally leads to better generalization, though diminishing returns and biases must be carefully managed.

The modern data-rich environment—fueled by the internet, sensors, and digital storage—has been crucial in enabling neural network breakthroughs.

8. Neural Networks Are Computationally Intensive

Training large neural networks demands enormous computational resources. Matrix multiplications involving high-dimensional arrays form the backbone of neural computation. Graphics processing units and specialized hardware such as tensor processing units accelerate these operations.

The scale can be staggering. Training cutting-edge models may require distributed systems spanning hundreds or thousands of processors. Energy consumption and environmental impact have become active areas of concern and research.

Despite this intensity, efficiency improvements continue. Techniques such as pruning, quantization, and knowledge distillation aim to reduce model size and computational demands without sacrificing performance.
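Of the techniques named above, quantization is the easiest to sketch. The example below stores each weight as a signed 8-bit integer plus one shared scale factor. It is a simplified symmetric scheme with made-up weights, not the method of any specific library.

```python
def quantize(weights, bits=8):
    """Map floats to signed integers using a single shared scale factor.
    Assumes at least one weight is nonzero."""
    max_int = 2 ** (bits - 1) - 1                     # 127 for 8 bits
    scale = max(abs(w) for w in weights) / max_int
    return [round(w / scale) for w in weights], scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]

weights = [0.82, -1.27, 0.05, 0.0, 0.6]   # hypothetical trained weights
quantized, scale = quantize(weights)
restored = dequantize(quantized, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each restored weight differs from the original by at most half the scale factor, while storage drops from 32 or 64 bits per weight to 8.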

The computational appetite of neural networks reflects both their power and their cost.

9. They Generalize Beyond the Training Data

A crucial property of neural networks is generalization—the ability to perform well on new, unseen data.

If a model merely memorized training examples, it would fail in real-world situations. Instead, through optimization and regularization techniques, neural networks learn underlying patterns that extend beyond specific cases.

Balancing underfitting and overfitting is central. Too simple a model cannot capture essential structure. Too complex a model may memorize noise. Methods such as dropout, early stopping, and data augmentation help improve generalization.
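Early stopping, one of the methods just mentioned, can be sketched as a simple rule over the validation loss recorded after each training epoch. The loss values and the `patience` setting are invented for illustration.

```python
def early_stopping_epoch(validation_losses, patience=2):
    """Return the index of the best epoch, stopping once the validation loss
    has failed to improve for `patience` consecutive epochs."""
    best_loss = float("inf")
    best_epoch = 0
    epochs_without_improvement = 0
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break   # the model has likely started to overfit
    return best_epoch

# Made-up validation curve: improves, then rises as the model starts to overfit.
losses = [0.90, 0.60, 0.45, 0.40, 0.42, 0.47, 0.55]
best = early_stopping_epoch(losses)   # 3
```

In practice the weights saved at the best epoch are the ones kept, so the model is captured before it begins memorizing noise.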

Generalization is where learning becomes meaningful. It transforms pattern recognition into prediction.

10. Neural Networks Have Transformed Language Processing

For decades, natural language processing relied heavily on hand-crafted rules and statistical models. Neural networks changed that landscape dramatically.

Recurrent neural networks and later transformer architectures enabled models to capture long-range dependencies in text. Attention mechanisms allowed systems to weigh the importance of different words in context.
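The weighting of words in context can be sketched with scaled dot-product attention for a single query. Real transformers compute queries, keys, and values from learned projections of the token embeddings; here they are small hand-written vectors so the arithmetic stays visible.

```python
import math

def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    peak = max(scores)                       # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output

# Three hypothetical tokens, each with a key and a value vector.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attention(query=[2.0, 0.0], keys=keys, values=values)
```

The query points along the first axis, so the first and third tokens receive equal, larger weights and the second token receives the smallest. The output is the correspondingly weighted blend of the value vectors.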

These advances made possible high-quality machine translation, text summarization, question answering, and conversational agents. Language models trained on vast corpora can generate coherent paragraphs, answer complex queries, and even compose poetry.

Behind this fluency lie probability distributions over sequences of tokens: mathematical structures shaped by neural learning.
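A minimal sketch of what such a distribution looks like for one step of generation: the model produces a raw score for every token in its vocabulary, and a softmax converts those scores into probabilities. The four-word vocabulary and the scores are invented for this example.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]

vocabulary = ["cat", "dog", "sat", "mat"]
# Hypothetical raw scores a model might assign to each candidate next token.
logits = [1.0, 0.5, 3.0, 0.0]
probabilities = dict(zip(vocabulary, softmax(logits)))
most_likely = max(probabilities, key=probabilities.get)   # "sat"
```

Generating text repeats this step: choose a token from the distribution, append it to the sequence, and compute a fresh distribution for the next position.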

11. They Are Vulnerable to Adversarial Examples

Despite their power, neural networks can be surprisingly fragile. Small, carefully crafted perturbations to input data—imperceptible to humans—can cause dramatic misclassifications. These are known as adversarial examples.

For instance, adding tiny noise to an image may cause a network to label it incorrectly with high confidence. This vulnerability reveals that neural networks sometimes rely on subtle patterns not aligned with human perception.
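The effect can be reproduced on a toy model. The sketch below attacks a hypothetical 100-feature linear classifier in the spirit of the fast gradient sign method: every feature moves by a tiny amount in the direction that lowers the score. The classifier, its weights, and the input are all invented for illustration.

```python
def score(weights, bias, x):
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def classify(weights, bias, x):
    return 1 if score(weights, bias, x) > 0 else 0

def sign(v):
    return (v > 0) - (v < 0)

def adversarial_example(weights, x, epsilon):
    """Shift every feature by at most epsilon in the direction that lowers the score.
    For a linear model the gradient of the score with respect to x is the weight vector."""
    return [xi - epsilon * sign(w) for xi, w in zip(x, weights)]

# Hypothetical classifier with weights alternating between +1 and -1.
weights = [1.0 if i % 2 == 0 else -1.0 for i in range(100)]
bias = 0.0
x = [0.51 if i % 2 == 0 else 0.49 for i in range(100)]   # score is about +1.0
x_adv = adversarial_example(weights, x, epsilon=0.02)     # score is about -1.0

original_label = classify(weights, bias, x)        # 1
adversarial_label = classify(weights, bias, x_adv)  # 0
```

No feature changes by more than 0.02, yet the label flips, because a hundred tiny shifts all push the score the same way. Inputs such as images have far more features than this, which gives small perturbations even more room to add up.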

Understanding and mitigating adversarial attacks is crucial, especially in safety-critical applications such as autonomous driving or medical diagnostics.

This fragility reminds us that neural networks are powerful but not infallible.

12. Interpretability Remains a Major Challenge

Neural networks are often described as “black boxes.” While we understand the mathematics of their operations, interpreting exactly why a specific decision was made can be difficult.

Researchers are developing methods to visualize activations, analyze feature importance, and probe internal representations. Techniques such as saliency maps and attention visualization provide partial insight.
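The idea behind a saliency map can be sketched by measuring how much the output changes when each input is nudged. Practical saliency methods obtain these sensitivities from gradients computed by backpropagation; the version below uses finite differences instead, and a hand-written function stands in for a trained network.

```python
import math

def model(x):
    """Stand-in for a trained network: a fixed, hand-written scoring function."""
    return math.tanh(3.0 * x[0] - 0.1 * x[1] + 0.0 * x[2])

def saliency(f, x, eps=1e-5):
    """Absolute sensitivity of f to each input, estimated by central differences."""
    scores = []
    for i in range(len(x)):
        up = list(x)
        up[i] += eps
        down = list(x)
        down[i] -= eps
        scores.append(abs(f(up) - f(down)) / (2 * eps))
    return scores

importance = saliency(model, [0.1, 0.5, 0.9])
```

The first input dominates, the second matters slightly, and the third has no influence at all. For an image, the same per-input scores arranged as pixels form the saliency map.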

Interpretability is not merely academic. In fields like healthcare, finance, and law, understanding reasoning processes is essential for trust and accountability.

The quest to open the black box continues, blending mathematics, visualization, and philosophy.

13. Neural Networks Contribute to Scientific Discovery

Beyond commercial applications, neural networks are increasingly used in scientific research. They assist in analyzing astronomical data, predicting protein structures, modeling climate systems, and accelerating particle physics experiments.

In some cases, neural networks act as surrogate models, approximating complex physical simulations at a fraction of computational cost. In others, they help detect rare events hidden in massive datasets.

The relationship between physics and neural networks is especially fascinating. Neural methods are used to solve differential equations, analyze quantum systems, and even assist in theoretical exploration.

Here, neural networks become tools not just for technology, but for expanding human knowledge itself.

14. They Raise Profound Ethical Questions

With great capability comes responsibility. Neural networks influence information flow, hiring decisions, loan approvals, and surveillance systems.

Bias in training data can propagate into biased predictions. Privacy concerns arise when models are trained on personal information. The automation of tasks affects employment and social structures.

Ethical AI research seeks fairness, transparency, accountability, and robustness. Addressing these issues requires interdisciplinary collaboration, involving computer scientists, ethicists, policymakers, and communities.

Neural networks are not inherently good or bad. Their impact depends on how they are designed, deployed, and governed.

15. Neural Networks Challenge Our Understanding of Intelligence

Perhaps the most incredible fact about neural networks is philosophical. They challenge our conception of intelligence.

For centuries, intelligence was associated with symbolic reasoning and rule-based logic. Neural networks show that complex behaviors can emerge from distributed, statistical learning processes.

They do not “understand” in the human sense. They do not possess consciousness or self-awareness. Yet they can perform tasks once thought uniquely human—recognizing speech, generating language, creating art-like images.

This raises profound questions. What is understanding? What is creativity? Can intelligence exist without awareness?

Neural networks force us to confront these questions with humility.

The Ongoing Journey of Neural Intelligence

Neural networks are not static achievements; they are evolving systems within an evolving field. Researchers continue to develop new architectures, training strategies, and theoretical frameworks. Hybrid models combine neural networks with symbolic reasoning. Efforts toward energy-efficient AI aim to reduce environmental impact. Advances in neuromorphic computing attempt to build hardware inspired more closely by biological brains.

The story of neural networks is a story of convergence—biology inspiring computation, mathematics shaping learning, hardware enabling scale, and society grappling with consequences.

They are tools, but they are also mirrors. In building systems that learn, we learn more about learning itself. In modeling intelligence, we reflect on our own cognition.

Neural networks demonstrate that complexity can arise from simplicity, that patterns can be extracted from noise, and that knowledge can emerge from data through structured adaptation.

They are electric dreams encoded in mathematics—networks of numbers that, when arranged and trained, reveal astonishing capabilities. And as we continue to explore their potential, we are not merely advancing technology. We are participating in one of the most profound scientific journeys of our time—the quest to understand and recreate intelligence itself.