There is something deeply fascinating about the human brain. Inside the quiet darkness of our skulls lives a universe of activity—billions of neurons firing, communicating, remembering, imagining, and dreaming. This intricate biological machine allows us to recognize faces, understand language, compose music, and explore the stars. For centuries, scientists have wondered whether such intelligence could ever exist outside biology. Could a machine learn the way a brain learns? Could circuits and silicon imitate thought itself?

Neural networks are humanity’s attempt to answer that question.

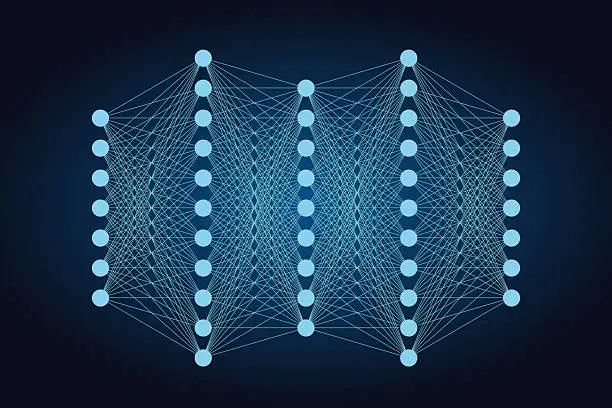

They are mathematical systems inspired by the structure of the brain, designed to learn patterns from data rather than follow rigid instructions. These systems power modern artificial intelligence, from voice assistants and recommendation engines to medical diagnosis tools and autonomous vehicles. Neural networks allow machines not merely to compute, but to recognize, interpret, and adapt.

The journey toward this technology is not just a technical story. It is a story of curiosity, persistence, failure, and discovery—a story about how humans tried to understand their own minds by recreating fragments of them in machines.

The Inspiration: The Human Brain

The origin of neural networks lies in biology. The human brain contains roughly eighty-six billion neurons, each connected to thousands of others through structures called synapses. A neuron receives electrical signals from neighboring cells, processes those signals, and sends a new signal onward. When many neurons interact together, they form networks capable of astonishing feats.

When you recognize a friend’s face in a crowded room, thousands of neurons in your visual cortex coordinate to process shapes, colors, and patterns. When you understand a sentence, networks of neurons interpret grammar and meaning. When you remember your childhood home, another pattern of neurons activates.

Learning in the brain occurs through changes in synaptic strength. Connections between neurons become stronger or weaker depending on experience. This process, often called synaptic plasticity, allows the brain to adapt.

Scientists wondered if a similar principle could be reproduced mathematically. Instead of biological neurons connected by synapses, perhaps artificial neurons could be connected by numerical weights. Instead of electrical impulses, signals could be represented by numbers flowing through equations.

This idea gave birth to artificial neural networks.

The First Artificial Neuron

In 1943, two researchers—Warren McCulloch and Walter Pitts—proposed a mathematical model of a neuron. Their model was simple but revolutionary.

An artificial neuron receives several inputs. Each input has a weight representing its importance. The neuron sums the weighted inputs and passes the result through a function that determines whether it should activate. If the signal exceeds a threshold, the neuron “fires” and sends its output to other neurons.
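The description above can be sketched in a few lines of Python. This is a minimal illustration rather than the original 1943 formulation: the function name `threshold_neuron`, the example weights, and the choice to fire when the sum reaches (rather than strictly exceeds) the threshold are all assumptions made for the example.

```python
def threshold_neuron(inputs, weights, threshold):
    """Return 1 if the weighted sum of the inputs reaches the threshold, else 0."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum >= threshold else 0

# Illustrative setup: two binary inputs with equal weights.
# With threshold 2 the unit only fires when both inputs are on (logical AND).
and_outputs = [threshold_neuron([a, b], [1, 1], threshold=2)
               for a in (0, 1) for b in (0, 1)]

# Lowering the threshold to 1 turns the same unit into logical OR.
or_outputs = [threshold_neuron([a, b], [1, 1], threshold=1)
              for a in (0, 1) for b in (0, 1)]

print(and_outputs)  # [0, 0, 0, 1]
print(or_outputs)   # [0, 1, 1, 1]
```

Changing only the threshold changes what the unit computes, which hints at how different behaviors can come from the same simple building block.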

This simplified abstraction captured a basic idea: complex behavior could emerge from networks of simple units.

Though crude compared to real biological neurons, this model laid the conceptual foundation for neural networks. It suggested that intelligence might arise from interconnected computational elements rather than from carefully programmed rules.

Early Optimism and the Perceptron

In the late 1950s, enthusiasm for neural networks grew when psychologist and computer scientist Frank Rosenblatt introduced the perceptron. The perceptron was a machine designed to recognize patterns in images.

The perceptron worked by adjusting its weights based on examples. If it made a mistake, the weights were corrected. Over time, the system learned to classify inputs correctly.
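A rough software sketch of this error-correction idea is shown below. It is not Rosenblatt's hardware design: the function names, the learning rate of 1.0, the zero starting weights, and the tiny OR dataset are assumptions chosen to keep the example small.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum plus bias is positive, otherwise stay silent (0)."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

def train_perceptron(samples, learning_rate=1.0, max_epochs=100):
    """Adjust weights only when a prediction is wrong; stop once an epoch has no mistakes."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            if error != 0:
                mistakes += 1
                # Nudge each weight toward the correct answer for this example.
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        if mistakes == 0:
            break
    return weights, bias

# Illustrative data: the logical OR of two binary inputs.
or_samples = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]
weights, bias = train_perceptron(or_samples)
print([predict(weights, bias, x) for x, _ in or_samples])  # [0, 1, 1, 1]
```

Nothing in the code states what OR means. The correct weights are found purely from the labeled examples.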

Rosenblatt’s perceptron was implemented on hardware and demonstrated the ability to distinguish simple visual patterns. The idea that machines could learn from data rather than explicit instructions captured public imagination. Some researchers predicted that intelligent machines might soon rival human perception.

But the optimism did not last.

The First AI Winter

In 1969, Marvin Minsky and Seymour Papert published Perceptrons, a book analyzing the limitations of these machines. They showed that a single-layer perceptron can only learn patterns that are linearly separable, meaning the two classes can be divided by a single straight line or plane. Patterns without that property are beyond its reach, no matter how long it trains.
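The exclusive-or (XOR) function, which outputs 1 only when its two inputs differ, is the standard example of such a pattern. The sketch below is a brute-force illustration, not a proof: it tries every combination of two weights and a bias on a coarse grid (the grid range and the helper names are assumptions for this example) and reports whether any single threshold unit reproduces a given truth table.

```python
from itertools import product

def linear_unit(w1, w2, bias, a, b):
    """A single-layer perceptron with two inputs."""
    return 1 if w1 * a + w2 * b + bias > 0 else 0

def find_separator(truth_table, grid):
    """Return the first (w1, w2, bias) on the grid that matches the table, or None."""
    for w1, w2, bias in product(grid, repeat=3):
        if all(linear_unit(w1, w2, bias, a, b) == out
               for (a, b), out in truth_table.items()):
            return (w1, w2, bias)
    return None

grid = [i / 2 for i in range(-8, 9)]  # -4.0 to 4.0 in steps of 0.5
and_table = {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1}
xor_table = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

print(find_separator(and_table, grid) is not None)  # True
print(find_separator(xor_table, grid))              # None
```

The search fails for XOR on any grid, because the four required inequalities contradict one another: the unit would need `bias <= 0`, `w1 + bias > 0`, `w2 + bias > 0`, and `w1 + w2 + bias <= 0` all at once.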

This limitation was significant. It suggested that early neural networks were far less powerful than many had hoped.

Funding declined, research slowed, and neural networks fell out of favor. The years that followed are often described as the first “AI winter,” a period when enthusiasm for artificial intelligence cooled dramatically.

Yet the core idea of neural networks did not disappear. Some scientists continued exploring ways to overcome the limitations.

The Breakthrough of Multilayer Networks

The key insight was that neural networks needed multiple layers. Instead of a single layer of artificial neurons making a decision, networks could contain hidden layers between input and output.

These hidden layers allowed networks to represent more complex relationships. But training such networks was challenging. Adjusting thousands—or millions—of weights required an efficient learning method.

The solution emerged through the development of the backpropagation algorithm. Researchers such as Geoffrey Hinton, David Rumelhart, and Ronald Williams helped popularize this approach in the 1980s.

Backpropagation allowed neural networks to calculate how each weight contributed to an error and adjust it accordingly. Errors at the output layer were propagated backward through the network, guiding the learning process.
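To make the backward flow of error concrete, here is a small sketch for a network with two inputs, two hidden units, and one output. The sigmoid activation, the squared-error loss, the parameter values, and all function names are assumptions chosen for illustration. The final lines compare one backpropagated gradient against a finite-difference estimate, a common sanity check.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def make_params(first_weight=0.5):
    # Arbitrary illustrative values, not taken from any trained model.
    # Layout: (hidden weights, hidden biases, output weights, output bias).
    return ([[first_weight, -0.3], [0.8, 0.2]], [0.1, -0.1], [0.7, -0.4], 0.05)

def forward(params, x):
    """Forward pass; returns the hidden activations and the network output."""
    w_hidden, b_hidden, w_out, b_out = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w_hidden, b_hidden)]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + b_out)
    return hidden, output

def loss(params, x, target):
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2

def backprop(params, x, target):
    """Gradient of the loss for every weight and bias, computed with the chain rule."""
    _, _, w_out, _ = params
    hidden, output = forward(params, x)
    # Error signal at the output unit.
    delta_out = (output - target) * output * (1.0 - output)
    grad_w_out = [delta_out * h for h in hidden]
    # Propagate the error signal backward to each hidden unit.
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(w_out, hidden)]
    grad_w_hidden = [[d * xi for xi in x] for d in delta_hidden]
    return grad_w_hidden, delta_hidden, grad_w_out, delta_out

x, target = [1.0, 0.0], 1.0
grads = backprop(make_params(), x, target)

# Numerical check on the first hidden weight using a central difference.
eps = 1e-5
numeric = (loss(make_params(0.5 + eps), x, target)
           - loss(make_params(0.5 - eps), x, target)) / (2 * eps)
print(abs(numeric - grads[0][0][0]) < 1e-6)
```

In a full training loop, each weight would then be moved a small step against its gradient, and the process would repeat over many examples.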

With this method, multilayer neural networks could learn complex patterns from data. The long-dormant dream of machine learning began to awaken again.

Learning from Data

Traditional computer programs follow explicit instructions written by programmers. If you want a computer to sort numbers, you write the steps for sorting. If you want it to calculate a trajectory, you encode the equations.

Neural networks work differently. Instead of instructions, they learn from examples.

Imagine teaching a neural network to recognize cats in images. Instead of programming rules about whiskers, ears, and fur patterns, you provide thousands or millions of labeled images. The network analyzes them and gradually adjusts its internal weights to identify patterns associated with cats.

At first, its guesses are mostly wrong. But with each correction, the weights shift slightly. Over time, the network learns to detect subtle features—edges, shapes, textures—that humans might struggle to describe explicitly.

This process mirrors aspects of human learning. A child recognizes animals not by memorizing equations, but by seeing many examples.

The Rise of Deep Learning

For many years, neural networks remained relatively small due to computational limitations. Training large networks required enormous processing power and massive datasets.

In the early 21st century, several developments changed the landscape.

Computers became vastly more powerful. Graphics processing units, originally designed for video games, proved highly effective for performing the parallel calculations required by neural networks. At the same time, the internet generated enormous datasets containing images, text, and speech.

These factors enabled the emergence of deep learning—neural networks with many layers, sometimes dozens or even hundreds.

Deep neural networks could extract increasingly abstract features from data. Early layers might detect edges in images. Later layers could recognize shapes. Even deeper layers might identify objects such as faces or animals.

A major milestone came in 2012 when a deep neural network dramatically improved image recognition performance in the ImageNet competition. The system, now known as AlexNet, was developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, and it demonstrated the extraordinary potential of deep learning.

From that moment forward, neural networks rapidly transformed artificial intelligence.

How Neural Networks Actually Work

Although inspired by biology, artificial neural networks operate through mathematical functions.

Each artificial neuron receives inputs represented by numbers. These inputs are multiplied by weights, which determine the strength of each connection. The weighted inputs are summed and passed through an activation function that produces the neuron’s output.

Activation functions introduce nonlinearity, allowing networks to model complex relationships. Without them, any stack of layers would collapse into a single linear transformation, no matter how many layers it contained.
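The role of nonlinearity can be checked with a little arithmetic. In the sketch below, the weight matrices, the input vector, and the choice of ReLU as the activation are all assumptions picked for illustration. Two layers without an activation give exactly the same result as one merged layer, while inserting an activation between them breaks that equivalence.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]

def relu(vector):
    """A common activation function: negative values become zero."""
    return [max(0.0, v) for v in vector]

w1 = [[1.0, -2.0], [0.5, 1.0]]   # first layer weights (illustrative)
w2 = [[2.0, 1.0], [-1.0, 3.0]]   # second layer weights (illustrative)
x = [1.0, 2.0]

two_linear_layers = matvec(w2, matvec(w1, x))
one_merged_layer = matvec(matmul(w2, w1), x)
with_relu = matvec(w2, relu(matvec(w1, x)))

print(two_linear_layers == one_merged_layer)  # True
print(two_linear_layers)                      # [-3.5, 10.5]
print(with_relu)                              # [2.5, 7.5]
```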

During training, the network makes predictions and compares them with correct answers. The difference between prediction and reality is called the error or loss.

The learning algorithm calculates how a small change in each weight would affect the loss, a quantity known as the gradient. It then adjusts each weight slightly in the direction that reduces the loss, a procedure called gradient descent. This process is repeated thousands or millions of times across many examples.
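The training loop described here can be sketched with the smallest possible model: a single weight `w` that predicts `y` as `w * x`. The data, the mean-squared-error loss, the learning rate, and the step count are assumptions chosen so the arithmetic stays easy to follow.

```python
def mean_squared_error(w, xs, ys):
    """Average squared difference between predictions w * x and targets y."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradient(w, xs, ys):
    """Derivative of the mean squared error with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]   # illustrative data generated by y = 2x

w = 0.0                # start from an uninformed guess
learning_rate = 0.05
losses = []
for step in range(50):
    losses.append(mean_squared_error(w, xs, ys))
    # Move the weight a small step against the gradient.
    w -= learning_rate * gradient(w, xs, ys)

print(round(w, 4))             # 2.0
print(losses[0] > losses[-1])  # True
```

Real networks repeat the same update for millions of weights at once, with the gradients supplied by backpropagation.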

Gradually, the network’s predictions improve. Patterns that were once invisible become embedded within the network’s structure.

Recognizing Images and Seeing the World

One of the most powerful applications of neural networks is computer vision. Human vision is extraordinarily complex, requiring the brain to interpret shapes, colors, motion, and depth.

Convolutional neural networks, often abbreviated as CNNs, were designed to process visual data. They use mathematical filters that slide across images, detecting features such as edges and textures.
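The sliding-filter idea can be sketched without any libraries. The function below is an illustrative implementation with no padding and a stride of one. Strictly speaking it computes a cross-correlation, which is the operation deep learning frameworks usually call convolution. The tiny image and the hand-written vertical-edge filter are assumptions for the example, since a trained CNN learns its filter values from data.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and sum the elementwise products at each position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(out_w)]
            for r in range(out_h)]

# A 3x4 image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# A filter that responds where brightness increases from left to right.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

print(convolve2d(image, vertical_edge))  # [[0, 2, 0], [0, 2, 0]]
```

The output is largest in the middle column, exactly where the dark region meets the bright one, and zero where the image is uniform.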

Early layers detect simple patterns. Deeper layers combine these patterns into more sophisticated representations—eyes, wheels, buildings, or faces.

These systems now power facial recognition technology, medical imaging analysis, and autonomous vehicles that interpret road scenes.

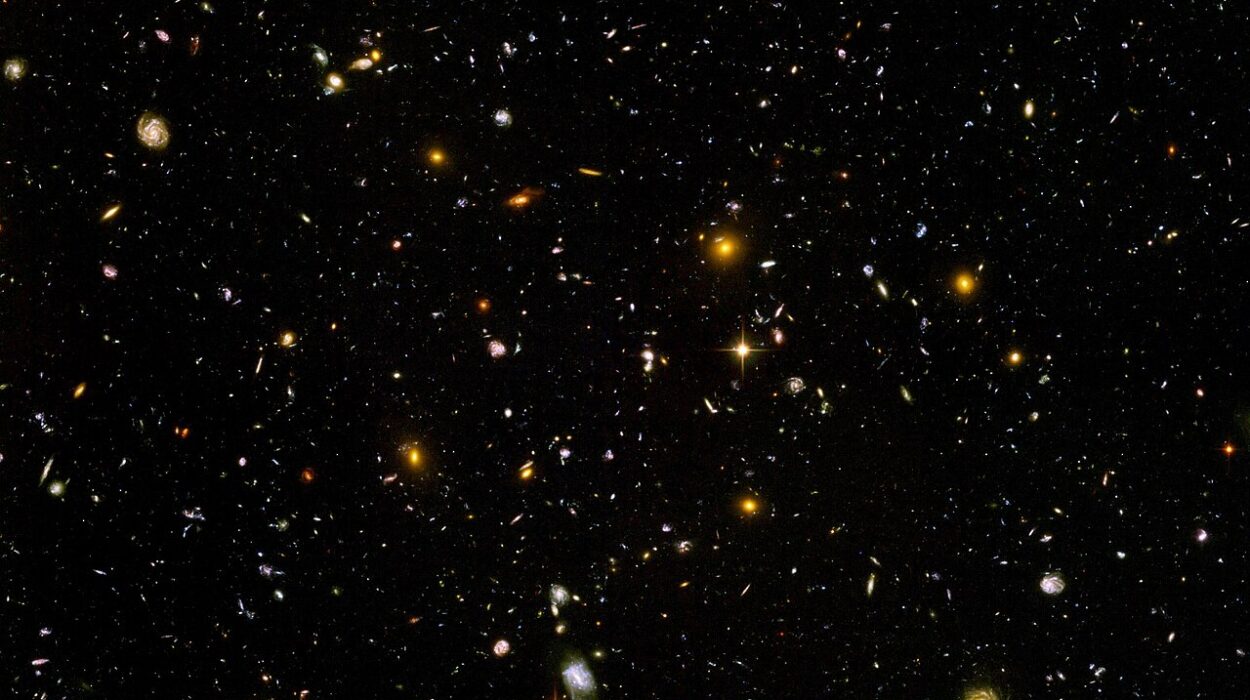

In hospitals, neural networks assist radiologists by analyzing scans and highlighting potential abnormalities. In astronomy, they help identify galaxies in vast datasets. The ability of machines to “see” is no longer science fiction.

Understanding Language

Another remarkable achievement of neural networks is their ability to process human language.

Language is complex and ambiguous. Words change meaning depending on context. Sentences can be structured in countless ways.

Recurrent neural networks, and later transformer architectures, enabled machines to analyze sequences of words and learn linguistic patterns.

These models can translate languages, summarize documents, answer questions, and generate coherent text. They learn grammar and semantics not from explicit rules but from enormous collections of written language.

Systems developed by organizations such as OpenAI and Google demonstrate how neural networks can engage with human communication in increasingly sophisticated ways.

The boundary between human and machine language processing has grown surprisingly thin.

Neural Networks and Creativity

For decades, creativity was considered a uniquely human ability. Art, music, and storytelling seemed beyond the reach of machines.

Yet neural networks have begun to challenge that assumption.

Generative models can create paintings, compose music, and generate realistic images from text descriptions. They analyze patterns in existing works and produce new variations.

Though these systems do not experience emotion or intention, their outputs can evoke powerful responses in human audiences. They reveal how creativity often emerges from recombining patterns in novel ways.

This development has sparked philosophical debates. If machines can produce art, what does that mean for human creativity? Are we witnessing collaboration between human imagination and machine capability?

The conversation is only beginning.

Challenges and Limitations

Despite their impressive achievements, neural networks have limitations.

They require enormous amounts of data and computational power. Training large models consumes significant energy and resources. Moreover, neural networks can sometimes produce incorrect or biased results if the data used for training contains biases.

Another challenge lies in interpretability. Neural networks often function as “black boxes,” making decisions through internal processes that are difficult for humans to fully understand.

Researchers are actively developing techniques to explain neural network behavior, improve fairness, and reduce energy consumption.

The goal is not only to build powerful AI systems but also to ensure they are reliable, transparent, and beneficial.

The Future of Brain-Inspired Computing

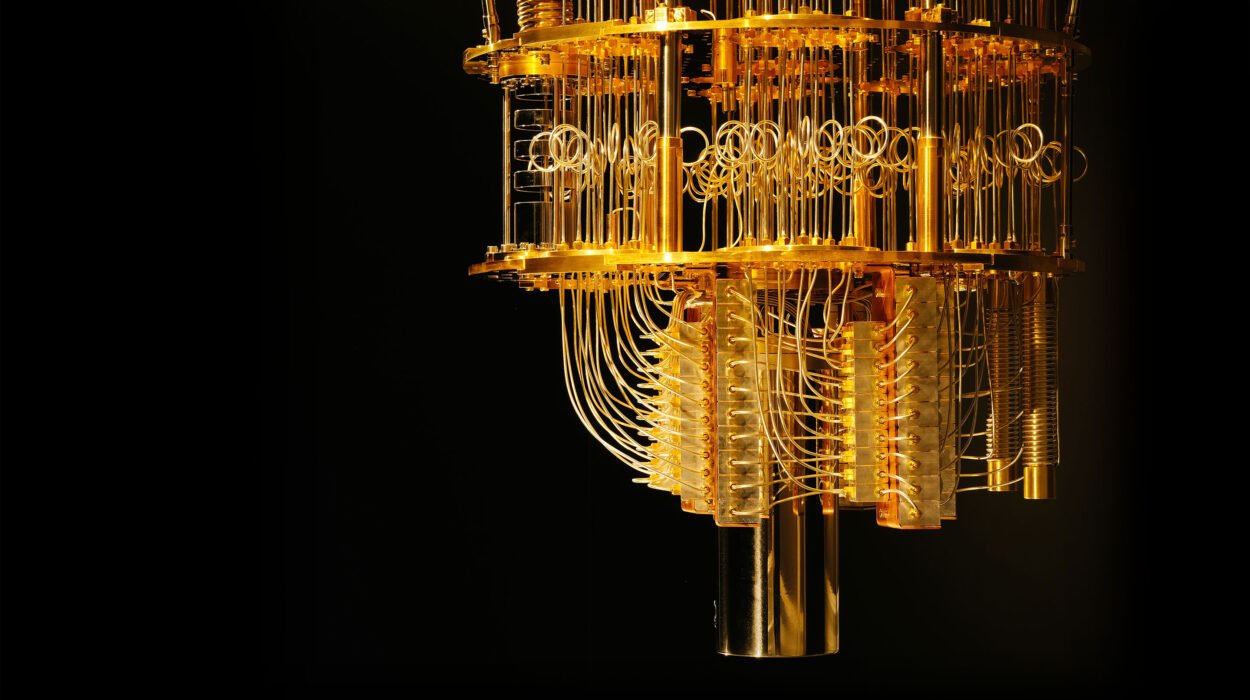

Neural networks are still evolving. Scientists are exploring architectures inspired more closely by the brain, including spiking neural networks that mimic the timing of biological neuron signals.

Neuromorphic computing—hardware designed to emulate neural structures—could dramatically increase efficiency. Instead of traditional processors executing instructions sequentially, neuromorphic chips allow millions of artificial neurons to operate simultaneously.

Such systems may bring us closer to machines that learn continuously from experience, much like living organisms.

At the same time, neuroscience continues to uncover deeper mysteries about the human brain. Understanding biological intelligence may inspire new forms of artificial intelligence.

The relationship between brain science and machine learning is becoming increasingly intertwined.

A Mirror of Our Own Intelligence

Neural networks are more than technological tools. They are reflections of humanity’s attempt to understand itself.

When scientists designed artificial neurons, they were inspired by the biological neurons that make thought possible. When engineers trained networks to recognize images or interpret language, they were replicating abilities that humans perform effortlessly every day.

In trying to build intelligence in machines, we are learning about the nature of intelligence itself.

What does it mean to learn? What is perception? How does knowledge emerge from experience?

These questions sit at the intersection of physics, mathematics, computer science, psychology, and neuroscience. Neural networks bring these fields together in a shared quest.

The Continuing Journey

The story of neural networks is far from finished. Each breakthrough opens new possibilities and new questions.

Machines that can learn are transforming medicine, science, industry, and art. They are helping researchers discover drugs, analyze climate patterns, and explore the cosmos.

Yet the most profound impact may be philosophical. Neural networks challenge our assumptions about intelligence, creativity, and the uniqueness of the human mind.

We have not recreated the brain, nor have we fully understood it. But through neural networks, humanity has taken a remarkable step: teaching silicon to learn from experience.

In doing so, we have built machines that echo, in their own limited way, the extraordinary networks inside our heads.

And as these artificial networks grow more capable, they continue to remind us of something profound—that intelligence, whether biological or artificial, emerges from connections, patterns, and the endless curiosity to understand the world.